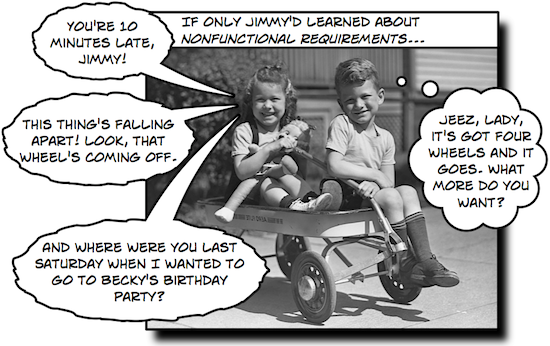

Understanding nonfunctional requirements — which some people call software quality attributes or nonbehavioral requirements — can make a big difference when you’re building software. But a lot of people have trouble taking a somewhat theoretical idea and applying it to a real-life project. Luckily, we’ve got an easy, practical technique to use nonfunctional requirements on a real software project.

How well does your program do… well, whatever it does?

I’ve wanted to write a post about nonfunctional requirements for a while. But I’ve been trying to find a good angle for talking about them, because while they can be really practical and useful on a software project, it’s a little hard to get that practicality across in a useful way.

Luckily, I’ve been spending a lot of time lately talking about architecture, since Jenny and I are going to give our Beautiful Teams talk at the ITARC New York conference next week. And that’s got me thinking a lot about how architects work. I’ve been asked more than once recently about what, exactly, the term “architecture” refers to. The quick answer is the textbook definition — designing, documenting and verifying the structure, components and properties of a system. But I always want to go beyond that. Any time I come across an interesting idea (and software architecture is full of them!), I try to come up with a way that it can help a developer out today, on a project that developer is working on. In fact, I’ve got a quick technique to help you do exactly that — it’s at the end of this post. And like many great software practices, it involves index cards.

So I started thinking about some common problems that software architects run into, especially junior ones who are still building up their experience. And that leads me straight to a problem that I’ve seen over and over again. A lot of people jump into architecture and design by starting with the question, “What’s this system going to do?” We’ve got a lot of very useful tools for that (like user stories and use cases). Obviously, you can’t design a system well without understanding what it does.

But I’ve had the opportunity to work with some very talented, very experienced software architects lately, and I’ve noticed something critically different about how they approach designing a system, and it’s really pointed me to an important difference that separates a senior architect from a junior one. When one of these guys gets started on a system, they don’t just think about what it’s going to do. They also think about how it’s going to do whatever it does.

That’s a really subtle point, and it’s a very easy one to overlook. But it’s very important. Important enough, in fact, to have a name: nonfunctional requirements.

Most of us have read about nonfunctional requirements. In fact, it’s a pretty common interview question: “Name a few nonfunctional requirements.” Someone who took a class in software architecture recently might be able to rattle a few of them off (usability, reliability, performance, scalability, availability…). And lots of project managers and business analysts I talk to seem to be on an eternal quest for the perfect nonfunctional requirements template.

If you’re not familiar with nonfunctional requirements, here’s how Jenny and I defined them in our first book:

Users have implicit expectations about how well the software will work. These characteristics include how easy the software is to use, how quickly it executes, how reliable it is, and how well it behaves when unexpected conditions arise. The nonfunctional requirements define these aspects about the system. (The nonfunctional requirements are sometimes referred to as “nonbehavioral requirements” or “software quality attributes.”)

– Andrew Stellman & Jennifer Greene, Applied Software Project Management, chapter 6 (O’Reilly 2005)

And that’s a good starting point. But there’s an art to actually using nonfunctional requirements to make your software better. So how do we do that?

Thinking “how well” from the start

One of those senior architects I mentioned gave me a really good tip recently, one that really rings true. He told me, “Always think about performance from day one of your project, and test for it until you deliver.” Now, this particular person works on software tools used to design high-availability, high-performance server systems, so he thinks about performance a lot. But his point was that to design systems well, you need to think about performance — and other nonfunctional requirements — from the start.

Take a minute and think about that, because I think it’s an important point. I like it a lot for two reasons.

I like the fact that he’s thinking about how well the software works from the beginning of the project. I’m a firm believer in the old QA saying that “you can’t test quality in.” Yes, I know that saying rubs some people the wrong way because they think it sometimes lets people off the hook for testing at the end of the project. But there’s definitely a lot of truth in the idea that developers who think about quality from the beginning of the project build better software. If you design for performance, and if you then code for performance, then it’s pretty likely that you’ll end up with a more performant design than if you only start thinking about performance at the very end of the project.

The other thing I like is that he didn’t say, “Think about performance, scalability, usability, robustness, etc., from the beginning of the project.” He narrowed it down to the single quality attribute that was most important to his particular project. I’ve talked to a lot of developers, project managers, designers, testers and business analysts over the years about nonfunctional requirements, and what I often find is that people seem overwhelmed. There are so many facets to quality beyond what the software does, and if you’re just trying to get started thinking about these things, it’s hard to know where to start.

Which leads me to my advice for developers. If you’re a programmer working on a project, here’s something that you can do today to improve the final product. Start with just three areas: performance, flexibility and reliability. I like these three because they’re easy to understand, and it’s not hard to brainstorm examples of how they can go wrong.

Start with some definitions. Here are ones that Jenny and I gave in Applied Software Project Management:

Performance: The performance constraints specify the timing characteristics of the software. Certain tasks or features are more time-sensitive than others; the nonfunctional requirements should identify those software functions that have constraints on their performance.

Flexibility: If the organization intends to increase or extend the functionality of the software after it is deployed, that should be planned from the beginning; it influences choices made during the design, development, testing, and deployment of the system.

Reliability: Reliability specifies the capability of the software to maintain its performance over time. Unreliable software fails frequently, and certain tasks are more sensitive to failure (for example, because they cannot be restarted, or because they must be run at a certain time).

Now, make them practical and useful to your project by doing three simple steps. To do this, you’ll need three index cards. Here’s what to do:

- On the front of each of the three index cards, write one type of nonfunctional requirement at the top. So on the first card, write “Performance”. On the second one, write “Flexibility”. And on the third one, write “Reliability”. Write these words on the front and the back of the card. If bright colors grab your attention, use a bright-colored highlighter to highlight them. (Personally, they don’t really do anything for me.)

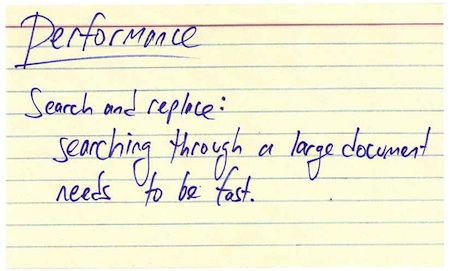

- Take each of the cards. On each of them write down the name of one feature of your software and what this particular attribute means for that feature. I like to use Search and Replace as an example whenever I talk about this sort of thing, because we’ve all used it and understand it. So on the Performance card I might write, “Search and replace: searching through a large document needs to be fast.”

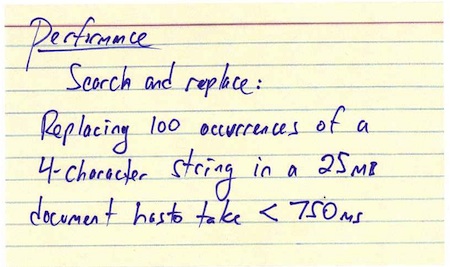

- Here’s the hard part. On the back of each card, write down a single test that you can do to figure out how well your software meets that requirement. So on the back of the performance card I might write, “Replacing 100 occurrences of a 4-character string in a 25MB document has to take under 750 milliseconds.” (The more concrete you can make this test, the better this works.)

Now that you’ve got those three cards, tack them up on your cubicle wall (or, even better, on your task board). Make sure the side with the feature you wrote down in the second step is facing forward. Make sure they’re someplace you’ll see them. Take just a minute or two each day to look at them and figure out if you’re headed in the right direction. What you’ll find more often than not is that as you’re designing your system, you won’t forget about those three things. Just spending a small amount of time writing down and thinking about these things can color your whole project.

Once you’ve moved from the design phase into the programming phase, flip the cards around so the test side is facing forward. (If you’re on an agile project with a three-week iteration, this might happen during the first week, but this works equally well for projects with a longer design phase.) As you’re writing the code for each of the features you wrote down, run that test by hand. It should only take a few minutes to do, and it will give you an idea of how well you’re doing. If you do this enough, you might end up figuring out a way to automate that test. If you do, and if you have a build server, then you can add it to the build. That way you’ll know whenever you check in code that degrades your project’s performance (or reliability, or flexibility).
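If you’re wondering what an automated version of the Performance card might look like, here’s a rough sketch in Python. It takes the example test from the back of the card (100 occurrences of a 4-character string, a 25MB document, a 750 millisecond budget) and turns it into a check you could run from a build. Everything else is an assumption made up for illustration: the function names, the filler text, the search and replacement strings, and the use of Python’s built-in `str.replace` as a stand-in for your real Search and Replace feature.

```python
import time

# Numbers taken from the back of the Performance card.
DOC_SIZE = 25 * 1024 * 1024   # 25MB document (ASCII, so one byte per character)
OCCURRENCES = 100             # how many matches get replaced
BUDGET_MS = 750               # the time limit written on the card

# Arbitrary values chosen for this sketch.
NEEDLE = "abcd"               # a 4-character search string
REPLACEMENT = "wxyz"


def build_document(size=DOC_SIZE, occurrences=OCCURRENCES, needle=NEEDLE):
    """Build filler text with `occurrences` copies of `needle` spread evenly."""
    chunk = size // occurrences
    filler = "." * (chunk - len(needle))
    return (filler + needle) * occurrences


def search_and_replace(text, needle, replacement):
    """Hypothetical stand-in for the feature under test; call your own code here."""
    return text.replace(needle, replacement)


def time_replace_ms(document, needle=NEEDLE, replacement=REPLACEMENT):
    """Run one search and replace; return (result, elapsed milliseconds)."""
    start = time.perf_counter()
    result = search_and_replace(document, needle, replacement)
    elapsed_ms = (time.perf_counter() - start) * 1000
    return result, elapsed_ms


def check_performance_card(document=None, budget_ms=BUDGET_MS):
    """Return (within_budget, elapsed_ms) for the test written on the card."""
    if document is None:
        document = build_document()
    expected = document.count(NEEDLE)
    result, elapsed_ms = time_replace_ms(document)
    # A fast but wrong replace should not count as passing.
    if NEEDLE in result or result.count(REPLACEMENT) != expected:
        raise AssertionError("search and replace did not replace every match")
    return elapsed_ms <= budget_ms, elapsed_ms
```

One way to hook this into a build is to call `check_performance_card()` from a small script and exit with a non-zero status when the first value it returns is `False`. Timings vary from machine to machine, so treat a single run as a signal to investigate rather than a precise measurement.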

Try doing that on your next project, and what you should find is that you spend more time thinking about these things. When opportunities to improve those three specific things come up, you’ll recognize them and take them. And that’s a great way to build better software.